Your Brain Is Not a Computer

What Green Berets, Shakespeare, and the Strait of Hormuz can teach you about real intelligence

“Practical men, who believe themselves to be quite exempt from any intellectual influences, are usually the slaves of some defunct economist.”

- John Maynard Keynes

“The empires of the future are the empires of the mind.”

- Winston Churchill

Three weeks ago, Iran closed the Strait of Hormuz. Not with a naval blockade. Not with mines or anti-ship missiles. With cheap drones, a handful of selective strikes, and a single statement from an IRGC commander: the strait is closed, and any vessel that tries to cross it will be set ablaze.

That was enough.

Insurance companies repriced war-risk premiums overnight. Shipping firms rerouted around the Cape of Good Hope. Within days, tanker traffic through the strait, the passage for 20% of the world’s crude oil, dropped by 97%.

The American response was algorithmic. Send warships. Escort convoys. Apply maximum military pressure until the strait reopens. Identify the problem, apply force, restore the prior state. No NATO ally has joined the coalition. Instead, India, Turkey, China, and Pakistan are negotiating safe passage directly with Tehran. Iran — its supreme leader dead, its military infrastructure degraded — has become the gatekeeper of the world’s most important energy chokepoint, not through force but through narrative.

Countries are asking Iran’s permission, not America’s.

I’ve been thinking about these events through the lens of a book I read recently. Because it explains something the analysts are missing.

A Green Beret walks into a village that wants him dead.

He speaks the language. He carries enough firepower to level every structure within a hundred metres. His mission is to build an alliance with the village elder, a man who has watched foreign soldiers come and go for thirty years and has no reason to trust this one.

The playbook would be to demonstrate capability. Project strength. This is how institutions build influence: prove you are competent, and compliance follows.

The Green Beret does something else. He sits down and tells the elder about his father.

Not a rehearsed story. Not a strategic disclosure. He talks about growing up in a house where things were difficult, about a relationship that was complicated, about a life that had some broken parts. The village elder listens. And then, slowly, begins to talk about his own.

Angus Fletcher tells this story in Primal Intelligence. Fletcher is a professor of story science at Ohio State who spent years embedded with US Special Operations Command. His core claim sounds obvious and rearranges everything once you take it seriously:

Your brain is not a computer. It is a story machine.

Computers process rules. The brain does something different: it generates “what if” simulations. It imagines futures that don’t exist. It models other people’s inner lives. It explores many paths simultaneously until one reaches somewhere worth going.

Fletcher calls this storythinking. And his argument is that it’s not a soft skill or an emotional decoration on top of real intelligence. It is intelligence, the original kind, the kind that kept us alive for two million years before anyone invented a spreadsheet.

This matters now because almost every institution you interact with is trying to replace storythinking with algorithms. Not because algorithms are better. Because they’re legible. You can measure them, scale them, put them in a dashboard. You cannot put a Green Beret’s broken-family story in a dashboard. You cannot optimise for the thing that made the village elder start talking.

The thing that actually works keeps getting engineered out of the system.

In the late 1800s, a doctor named William Osler did something radical at Johns Hopkins: he sent medical students to the wards.

Before Osler, students sat in lecture halls and studied cadavers. They learned medicine by mastering rules and applying them. Osler sent first-years to sit with actual patients — people who were sick, frightened, and didn’t present their symptoms in textbook order — and told them one thing: listen.

The students were terrible at diagnosis. No pattern library, no clinical experience. By every metric, less intelligent than their professors.

But they noticed things the professors had stopped noticing. They asked questions that seemed naive and turned out to be the ones nobody had thought to ask, because the experts had built neural pathways so efficient they could execute without thinking. And execution without thinking is the enemy of discovery.

The generation Osler trained produced insulin, antibiotics, and the randomised clinical trial.

Fletcher calls this the paradox of expertise: knowing and learning are opposites. The more automatic your competence, the less available you are to the anomaly that doesn’t fit. And the anomaly is where the next breakthrough lives.

I recognise this in myself. The longer I spend in any domain, the more I pattern-match faster and see less. By year five, you’re running on cached predictions. Efficient, yes. But the kind of efficiency that quietly closes the door on everything you haven’t already understood.

The Special Operations Aviation Regiment had a practice for this. Put a rookie pilot in the cockpit with a real mission. Have the expert co-pilot sit on his hands. The rookie benefits from genuine stakes. The expert benefits more, because the rookie’s unexpected decisions force him into territory the old neural pathways don’t cover. Doing it wrong in new ways teaches more than doing it right in the same old way.

“To be or not to be” is probably the most famous line in English literature, and Fletcher thinks it’s almost universally misread.

We treat it as Hamlet’s weakness, paralysis, overthinking. Fletcher reads it as the opposite. Hamlet’s capacity to hold that contradiction without resolving it prematurely is what makes him resilient.

Modern decision-making culture worships speed. The Green Berets’ doctrine is to move with 70% of the information you wish you had. Fletcher agrees. But there’s a difference between moving through uncertainty and fleeing from it. Hamlet is not frozen. He’s processing, holding contradictions that would destroy a lesser mind until the moment arrives when he can act with full integrity.

Resilience is not bouncing back. Bouncing back means the blow changed nothing, which means you learned nothing. Real resilience is growth that wouldn’t have been possible without the adversity. And growth requires a period of disorientation that looks, from the outside, exactly like weakness.

The brain has two responses to trauma: it breaks, or it integrates. The difference is whether the painful experience gets woven into the ongoing story of your life or walled off from it. Hamlet weaves. Ajax, in Sophocles, walls off, refuses to integrate his shame, and it kills him. Same calibre of mind. Opposite relationship to the story.

Storythinking generates possibility. Algorithmic thinking selects from existing options. In certain critical moments, they are not just different; they are antagonistic.

When you need to execute a known procedure, algorithmic thinking is superior. But when you need to navigate something unprecedented, an unfamiliar village, an anomalous patient, a geopolitical crisis with no historical precedent, the algorithm has nothing to select from. It needs options to exist before it can rank them. Storythinking is what makes them exist in the first place.

This is the quiet disaster Fletcher sees unfolding across institutions. The dashboards and KPIs and decision trees, each one a modest improvement in efficiency, are collectively eliminating the organ that generates the options the frameworks were supposed to choose between. The system gets faster and narrower simultaneously. It handles the expected with increasing precision and the unexpected with increasing brittleness.

Which brings me back to the Strait of Hormuz.

Every Western analyst modelling this crisis is running algorithmic thinking. Probability of blockade × oil price impact = portfolio adjustment. Military force required to reopen × time to deploy = strategic calculus. Reasonable frameworks for reasonable situations.

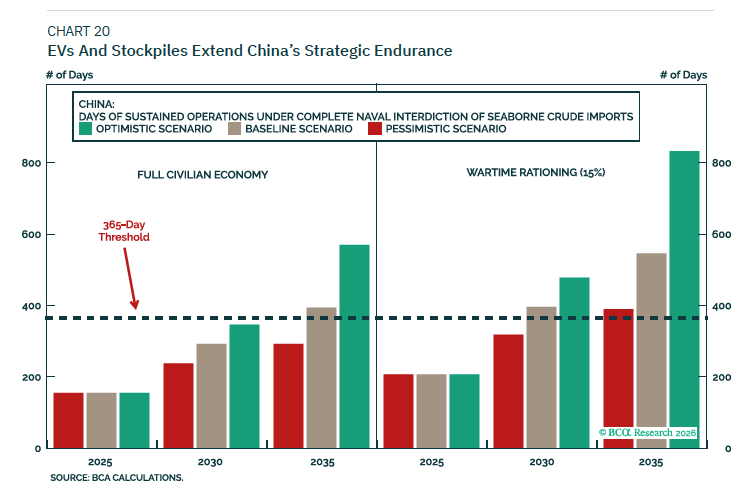

Consider what the best geopolitical research is actually producing right now. Marko Papic at BCA Research, one of the more rigorous macro strategists covering this conflict, argues with considerable evidence that Iran cannot close Hormuz indefinitely. The 1980s Tanker War saw 451 attacks on commercial shipping and oil still flowed. A global naval coalition, probably including China, will pry it open. He offers a specific formula for how long Beijing can sustain operations without Hormuz: Days of Survival = Total Reserves ÷ (Total Oil Demand − Secure Supply). He models the EV fleet penetration curve. He concludes China breaks free of the Hormuz constraint sometime in the next decade.

This is serious analysis, and I don’t dispute the numbers. But notice what the framework cannot hold. It tracks when China will feel strategically capable of acting independently of Hormuz. What it cannot measure is when China will feel narratively permitted to, when the story Beijing tells itself about its own sovereignty shifts enough to translate capability into action. That variable has no column in the spreadsheet.
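For what it's worth, the arithmetic behind that survival formula is simple enough to sketch in a few lines. This is only an illustration of the formula as quoted above: the function name, the units (barrels and barrels per day), and the input figures are my own placeholder assumptions, not Papic's data or real estimates for China.

```python
def days_of_survival(total_reserves_bbl: float,
                     total_demand_bpd: float,
                     secure_supply_bpd: float) -> float:
    """Days of Survival = Total Reserves / (Total Oil Demand - Secure Supply).

    Units are an assumption for this sketch: reserves in barrels,
    demand and secure supply in barrels per day.
    """
    shortfall_bpd = total_demand_bpd - secure_supply_bpd
    if shortfall_bpd <= 0:
        # Secure supply already covers demand, so reserves are never drawn down.
        return float("inf")
    return total_reserves_bbl / shortfall_bpd


# Hypothetical placeholder inputs, chosen only to make the arithmetic visible.
example_days = days_of_survival(
    total_reserves_bbl=1_000_000_000,
    total_demand_bpd=15_000_000,
    secure_supply_bpd=10_000_000,
)
print(f"{example_days:.0f} days")  # 1,000,000,000 / 5,000,000 = 200 days
```

Every input here is a quantity. Nothing in the signature can carry the narrative variable, which is the point of the paragraph above.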

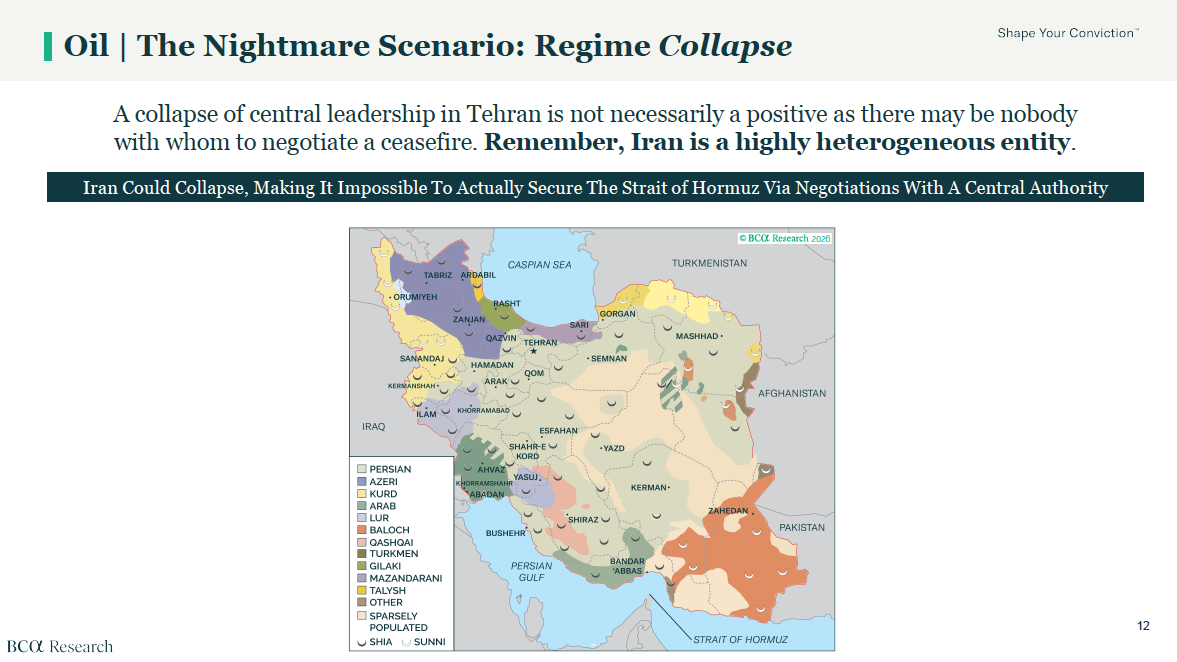

And there is something the algorithm misses entirely. Papic flags his nightmare scenario as Iranian regime collapse, not because it means military defeat, but because it means there is no longer a central authority to negotiate with.

The algorithmic playbook requires a counterpart: apply force, demand compliance, receive surrender. A fragmented Iran, with multiple factions and no unified command, produces a situation where the demand lands and there is simply nobody authorised to accept it. The framework doesn’t fail because it was applied wrongly. It fails because the world stopped being the kind of place where it applies. Storythinking would have anticipated that. It asks: who are the characters, what do they want, and what happens to the story if one of them disappears? The algorithm has no such question.

Iran, meanwhile, is not running a probability model. Iran is telling a story. The story is: we may be weaker militarily, but we decide who passes through our waters. We are the gatekeeper, not the gatekept. And the countries that need our oil will come to us on our terms, not Washington’s.

Hamidreza Azizi, an Iranian scholar of the country’s security apparatus, made a point recently that sharpened this for me: Iran’s war objective is not military. It is narrative. They want to make this conflict so globally costly that no American president will ever green-light it again. They are not fighting to win a battle. They are fighting to change the story that future decision-makers tell themselves about what is possible. That is a storythinking objective operating at civilisational scale, and the algorithmic frameworks modelling the crisis have no category for it.

Bruno Maçães, the geopolitical strategist, observed that American political culture has increasingly lost the capacity to process abstract concepts such as institutions, culture, and structural power, and can now only express victory by eliminating the opposing personality. Kill the leader, declare the win.

This is algorithmic thinking at its most reductive: identify target, apply force, claim victory. The question of what story you’re creating by the application of force, what narrative you’re handing to the other side, what identity you’re reinforcing in the countries watching, doesn’t enter the calculus. It can’t. The framework has no field for it.

And so the US killed Iran’s supreme leader, destroyed military infrastructure, and demanded the strait reopen. The algorithmic prediction: a weakened Iran capitulates. What actually happened: Iran seized the narrative.

Countries that should have rallied behind American power are instead cutting bilateral deals with Tehran, because India, Turkey, and China are asking a narrative question, not an analytical one: what story do we want to be in? The story of an American client helping force a waterway open? Or the story of a sovereign nation with its own relationship to the Gulf?

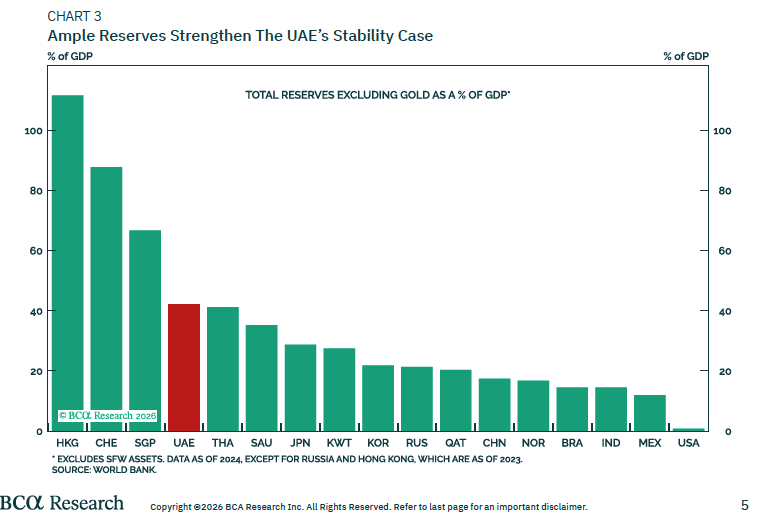

Papic’s sharpest call in this crisis, incidentally, is not a model. It is a story. Western media has declared Dubai finished. He says they’re wrong, because Dubai was never built for Western sensibilities in the first place. Walk through a Dubai shopping mall and you hear Mandarin, Hindi, and Russian as often as English. The UAE is not Singapore-for-Westerners.

It is the financial capital of the Global South, serving capital that is fleeing risks far larger than an Iranian drone. That call doesn’t come from a dataset. It comes from having read the room. It is storythinking dressed in the language of geopolitical analysis. His best work in this crisis is, without knowing it, a demonstration of Fletcher’s thesis.

Maçães also made a point about trust that maps directly onto Fletcher’s Green Beret scene. The US, he argues, has consumed in a single year credibility that took a century to build. No reasonable actor would now trust an American guarantee: you could be assassinated the day after signing the deal. Trust is built through story, through the slow disclosure of who you actually are. It can take years to earn and seconds to destroy. The institutions running this crisis have no mechanism for measuring it, so they act as if it doesn’t exist.

There is one detail from this conflict that illustrates the gap better than anything I could invent. When US and Israeli strikes damaged the Golestan Palace in Tehran, a UNESCO World Heritage site, it appeared on a target assessment as collateral within acceptable parameters. Azizi mentioned, almost in passing, that the Golestan Palace was where he and his wife had their first date. A target list has no field for that. A story does. And the difference between a framework that can hold both facts simultaneously and one that can only hold the first is the difference Fletcher has been describing for three hundred pages.

The algorithm says: apply force, restore equilibrium. The story says: the equilibrium is already gone, and the question is what new arrangement you can live inside.

There are a lot of books about creativity and the brain. Fletcher’s is different.

What he understands is that storythinking is not a technique you implement on Tuesday. It is a willingness to stay in the mess, to let your emotions inform your decisions rather than contaminate them, to trust that the feeling of not-knowing is the condition under which your deepest intelligence operates.

Fear and curiosity, he writes, are the brain’s two primary data channels. The person who suppresses fear is not brave; they’re deaf to the most refined threat-detection system evolution produced. Courage is the capacity to feel fear and move anyway. The vagus nerve, what Fletcher calls the courage nerve, is the biological mechanism. You don’t override fear. You carry it forward.

This maps onto something I have been thinking about more in the context of AI. The most important human capacities are not the cognitive ones. They are the ones that emerge from having a body in a world. From feeling fear, grief, curiosity, shame. From sitting with a village elder and sharing the texture of your actual life. From holding a contradiction that would be easy to resolve and choosing not to, because the resolution would be a lie.

The brain is not a computer. It is a two-million-year-old instrument for navigating uncertainty through story. The more powerful our actual computers become, and the more volatile the world those computers are modelling, the more essential it is to understand the difference.

Fletcher’s prescription is simple. Saturate yourself in human experience. Shakespeare, above all, not because the plays are prestigious but because they are a compendium of every human situation compressed into a form the brain absorbs through story. King Lear for when authority collapses. Othello for when trust is weaponised. The Tempest for when you have to let go of power and still find out who you are.

And when you walk into a room where you’re supposed to perform competence, consider telling the truth about your life instead. It worked for the Green Beret. It’s working for Iran, in the most cynical and consequential way imaginable: a weakened regime telling a story of sovereignty that is reorganising global energy flows more effectively than a carrier strike group.

The best analysts in this crisis know this too, even when they don’t say so. Their sharpest calls come not from the model but from reading the room, from asking what story the characters are living inside, and whether the world is still the kind of place where the old frameworks apply.

The Green Beret probably doesn’t think of himself as a storythinker. He thinks of himself as someone who got lucky that day: the elder was willing to talk, something connected, it worked.

That’s probably the most important part. The people who are best at this don’t know they’re doing it. They’re just paying attention.

Which is, when you think about it, the whole thing.

It might work for you too. Not because vulnerability is a strategy. Because it’s the only thing that has ever made another person trust you enough to tell you what’s actually going on.

True intelligence lies not just in optimisation and analysis, but in the ability to shape narratives, embrace ambiguity, and generate entirely new possibilities.

You will never find the path if you don’t get lost, because being lost is finding the path. - Ancient African proverb.